Tuning PID Controllers with Automatic Differentiation

PID controllers are the workhorses of industrial control. They are simple enough to fit on a microcontroller, yet surprisingly effective across a wide range of systems. The catch is that tuning them well takes time and — if you are being honest — a fair amount of intuition. Classic recipes like Ziegler-Nichols are fast but often leave performance on the table. Model-free autotuning approaches work but can require live experiments on the real plant.

Here is a different take: write down a differentiable simulation of your system, define a loss that captures what “good control” means to you, and let gradient descent find the gains. Automatic differentiation handles the calculus; you just specify the objective.

This post walks through exactly that, using JAX.

The Example: A Second-Order Mass-Spring-Damper

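The plant is a linear mass-spring-damper:

$$m\ddot{x} + c\dot{x} + kx = u$$

with mass $m$, damping $c$, and spring stiffness $k$, driven by the control force $u$. The PID controller drives the position $x$ to a unit step setpoint:

$$r(t) = 1 \quad \text{for } t \ge 0$$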

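This system has a natural frequency of $\omega_n = \sqrt{k/m}$ and a damping ratio of $\zeta = c/(2\sqrt{km})$. With the parameters used here $\zeta$ is small, so the open-loop step response is lightly damped and oscillatory. That makes it a good stress test for the tuning method.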
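As a quick sanity check on these two formulas, the snippet below evaluates them for a hypothetical parameter set ($m = 1$, $c = 0.5$, $k = 4$). These numbers are placeholders chosen to give a lightly damped plant. They are not the values used in the post.

```python
import math

# Hypothetical plant parameters, for illustration only.
m, c, k = 1.0, 0.5, 4.0

omega_n = math.sqrt(k / m)            # natural frequency [rad/s]
zeta = c / (2.0 * math.sqrt(k * m))   # damping ratio (dimensionless)

print(f"omega_n = {omega_n:.3f} rad/s, zeta = {zeta:.3f}")
```

With these placeholders $\zeta = 0.125$, well inside the lightly damped regime.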

Differentiating Through a Simulation

The key idea is to discretize the system with a fixed time step and write the entire rollout as a JAX function. Since every arithmetic operation in the loop is tracked by JAX’s tracing mechanism, you get exact gradients of any scalar loss with respect to the PID gains for free.
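Here is a minimal sketch of the idea. It assumes hypothetical plant constants (M, C, K_SPRING) and a hypothetical step DT = 0.01 s, and it uses a plain integral-of-squared-error loss as a stand-in for the post's full objective. The rollout is a jax.lax.scan over a step function, and jax.grad returns the derivative of the loss with respect to all three gains at once.

```python
import jax
import jax.numpy as jnp

# Hypothetical plant and simulation settings (placeholders, not the post's values).
M, C, K_SPRING = 1.0, 0.5, 4.0
DT, T_END = 0.01, 8.0
N_STEPS = int(round(T_END / DT))
SETPOINT = 1.0


def rollout(gains):
    """Simulate the closed loop and return the error trajectory e_k = r - x_k."""
    kp, ki, kd = gains

    def step(carry, _):
        x, v, integ = carry
        e = SETPOINT - x
        u = kp * e + ki * integ - kd * v      # derivative acts on the measured velocity
        a = (u - C * v - K_SPRING * x) / M
        v_next = v + DT * a                   # semi-implicit Euler: velocity first...
        x_next = x + DT * v_next              # ...then position with the new velocity
        return (x_next, v_next, integ + DT * e), e

    zero = jnp.zeros((), dtype=jnp.float32)
    _, errors = jax.lax.scan(step, (zero, zero, zero), None, length=N_STEPS)
    return errors


def ise_loss(gains):
    """Plain integral of squared error, standing in for the full objective."""
    errors = rollout(gains)
    return jnp.sum(errors ** 2) * DT


grad_fn = jax.jit(jax.grad(ise_loss))
g = grad_fn(jnp.array([2.0, 0.0, 0.0], dtype=jnp.float32))  # P-only starting point
print(g)  # dL/dKp, dL/dKi, dL/dKd
```

Swapping ise_loss for a richer objective requires no change to the differentiation code. jax.grad only needs the loss to be a scalar function of the gains.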

Derivative-on-measurement

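One subtlety worth flagging upfront: the derivative term is applied to the measurement rather than the error:

$$u(t) = K_p e(t) + K_i \int_0^t e(\tau)\,d\tau - K_d \dot{x}(t), \qquad e(t) = r(t) - x(t)$$

The minus sign on the $K_d$ term is intentional. For a constant setpoint $\dot{e} = -\dot{x}$, so $-K_d\dot{x}$ gives the same damping action as $K_d\dot{e}$ everywhere except at the setpoint jump. To see why that jump matters, consider what happens at $t = 0$ with derivative-on-error instead. The setpoint is a unit step, so the error jumps discontinuously from $0$ to $1$ in the very first time step. The finite-difference derivative is then $(1 - 0)/\Delta t = 1/\Delta t$, and the control signal spikes by $K_d/\Delta t$ regardless of the magnitude of $K_p$. With the optimised $K_d$ found below, that spike would sit on top of the proportional term, which is something no real actuator can tolerate.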

With derivative-on-measurement, this problem disappears. At $t = 0$ the system starts at rest with $v_0 = 0$, so the derivative contribution $-K_d v_0$ is zero. The integral is also still zero, so the first control output is simply $u_0 = K_p e_0 = K_p$. Subsequent samples see a non-zero but finite $v_k$, so the damping action builds up smoothly. This is standard practice in industrial PID implementations.
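The arithmetic at $t = 0$ can be spelled out in a few lines of plain Python. The gains kp, kd and the step dt below are hypothetical. The derivative is written as a backward finite difference so that the two variants are directly comparable. With a velocity state, the measurement version is simply $-K_d v_0 = 0$.

```python
# Hypothetical gains and step size, chosen only to make the arithmetic visible.
kp, kd, dt = 5.0, 1.0, 0.01
r = 1.0                     # unit step setpoint applied at t = 0
x_prev, x0 = 0.0, 0.0       # plant at rest: position unchanged across the step
e_prev, e0 = 0.0, r - x0    # error just before and just after the step

# Integral term omitted: it is still zero at the first sample.
# Derivative-on-error: the finite difference of e sees the setpoint jump.
u0_on_error = kp * e0 + kd * (e0 - e_prev) / dt
# Derivative-on-measurement: the finite difference of -x ignores the setpoint.
u0_on_measurement = kp * e0 - kd * (x0 - x_prev) / dt

print(u0_on_error, u0_on_measurement)  # 105.0 5.0
```

The extra 100 in the first case is exactly $K_d/\Delta t$.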

Discrete update equations

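The integrator is semi-implicit Euler (symplectic Euler): velocity is updated first using the current acceleration, then position is advanced using the updated velocity. This is noticeably better behaved than explicit Euler for oscillatory systems at the same step size $\Delta t$. Explicit Euler steadily injects energy into an undamped oscillator, while semi-implicit Euler keeps the energy bounded.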

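The complete update for state $(x_k, v_k)$ and integral accumulator $I_k$ is:

$$\begin{aligned}
e_k &= r - x_k \\
u_k &= K_p e_k + K_i I_k - K_d v_k \\
v_{k+1} &= v_k + \frac{\Delta t}{m}\left(u_k - c\,v_k - k\,x_k\right) \\
x_{k+1} &= x_k + \Delta t\, v_{k+1} \\
I_{k+1} &= I_k + \Delta t\, e_k
\end{aligned}$$

Note that $x_{k+1}$ uses $v_{k+1}$ (already updated), not $v_k$.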
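The sketch below shows why the semi-implicit update order matters. It runs both schemes on a hypothetical undamped oscillator ($c = 0$, $u = 0$, $k/m = 4$, $\Delta t = 0.05$) and compares the mechanical energy after 2000 steps. Explicit Euler multiplies the energy by exactly $1 + \omega_n^2\Delta t^2$ every step, so it grows without bound. Semi-implicit Euler keeps the energy close to its initial value.

```python
import jax.numpy as jnp
from jax import lax

# Hypothetical undamped oscillator (c = 0, u = 0) so only the integrator matters.
M, K_SPRING, DT, N_STEPS = 1.0, 4.0, 0.05, 2000


def energy(x, v):
    """Total mechanical energy: kinetic plus spring potential."""
    return 0.5 * M * v ** 2 + 0.5 * K_SPRING * x ** 2


def explicit_euler(state, _):
    x, v = state
    a = -K_SPRING * x / M
    return (x + DT * v, v + DT * a), None       # both updates use the old state


def semi_implicit_euler(state, _):
    x, v = state
    v_next = v + DT * (-K_SPRING * x / M)       # velocity first...
    return (x + DT * v_next, v_next), None      # ...then position with the new velocity


init = (jnp.float32(1.0), jnp.float32(0.0))
(xe, ve), _ = lax.scan(explicit_euler, init, None, length=N_STEPS)
(xs, vs), _ = lax.scan(semi_implicit_euler, init, None, length=N_STEPS)
print("initial:", float(energy(*init)))
print("explicit Euler:", float(energy(xe, ve)))
print("semi-implicit Euler:", float(energy(xs, vs)))
```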

The Loss Function

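The objective combines four terms:

$$\mathcal{L} = \underbrace{\frac{1}{N}\sum_{k} w_k e_k^2}_{\text{weighted tracking}} + \underbrace{\lambda_{\text{tail}}\,\frac{1}{N}\sum_{k} \max\left(|e_k| - \varepsilon,\, 0\right)}_{\text{tail}} + \underbrace{\lambda_u\,\frac{1}{N}\sum_{k} u_k^2}_{\text{effort}} + \underbrace{\lambda_I\,\frac{1}{N}\sum_{k} I_k^2}_{\text{integral}}$$

where $w_k = 1 + 5\,(t_k/T)^2$ and $T$ is the simulation horizon.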

Weighted tracking is the dominant term. The quadratic time-weighting goes from $w = 1$ at $t = 0$ to $w = 6$ at $t = T$, so errors near the end of the simulation are penalised six times more heavily than early-transient errors. This is similar in spirit to ITAE (integral of time-weighted absolute error), but it uses a quadratic ramp normalised by the horizon length rather than a linear ramp in absolute time.

Tail penalty adds a hard push toward zero once the error magnitude exceeds a small threshold $\varepsilon$. It acts as a deadband: below the threshold the gradient from this term vanishes, which prevents the optimiser from over-tightening in the noise-free simulation.

Effort penalises large control signals with a small coefficient $\lambda_u$, discouraging unnecessarily aggressive gains.

Integral penalty discourages large integral accumulation, which indirectly limits integral windup.
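Here is one way to write the four terms described above in JAX, as a sketch. The plant constants and the coefficients LAM_TAIL, EPS, LAM_U and LAM_I are hypothetical placeholders, since the post's actual values are not reproduced here. Each term is averaged over the time steps.

```python
import jax
import jax.numpy as jnp

# Hypothetical plant, horizon and loss coefficients (placeholders only).
M, C, K_SPRING = 1.0, 0.5, 4.0
DT, T_END = 0.01, 8.0
N_STEPS = int(round(T_END / DT))
LAM_TAIL, EPS, LAM_U, LAM_I = 1.0, 0.01, 1e-4, 1e-3


def simulate(gains):
    """Return error, control and integral trajectories for gains = (Kp, Ki, Kd)."""
    kp, ki, kd = gains

    def step(carry, _):
        x, v, integ = carry
        e = 1.0 - x
        u = kp * e + ki * integ - kd * v
        v = v + DT * (u - C * v - K_SPRING * x) / M
        x = x + DT * v
        return (x, v, integ + DT * e), (e, u, integ)

    zero = jnp.zeros((), dtype=jnp.float32)
    _, (e, u, integ) = jax.lax.scan(step, (zero, zero, zero), None, length=N_STEPS)
    return e, u, integ


def loss(gains):
    e, u, integ = simulate(gains)
    t = jnp.arange(N_STEPS) * DT
    w = 1.0 + 5.0 * (t / T_END) ** 2                                # 1 at t = 0, about 6 at t = T
    tracking = jnp.mean(w * e ** 2)                                 # weighted tracking
    tail = LAM_TAIL * jnp.mean(jnp.maximum(jnp.abs(e) - EPS, 0.0))  # deadband hinge
    effort = LAM_U * jnp.mean(u ** 2)                               # control effort
    integral = LAM_I * jnp.mean(integ ** 2)                         # integral accumulation
    return tracking + tail + effort + integral


gains = jnp.array([2.0, 0.0, 0.0], dtype=jnp.float32)
value, grads = jax.value_and_grad(loss)(gains)
print(value, grads)
```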

The weighting scheme makes it much harder for the optimiser to plateau with a residual steady-state offset. The gradient of the squared-error term still shrinks in proportion to the error, but at late times it is amplified by a weight of up to 6. As long as the error exceeds the threshold, the tail term also adds a constant-magnitude push on top.
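The difference between these two gradient behaviours is easy to check numerically. The sketch below evaluates the derivative with respect to $e$ of the late-time tracking term ($w = 6$) and of the hinge-shaped tail term for a few error values. EPS = 0.01 is a hypothetical threshold.

```python
import jax
import jax.numpy as jnp

EPS = 0.01  # hypothetical deadband threshold


def late_tracking(e):
    """Squared error with the late-time weight w = 6."""
    return 6.0 * e ** 2


def tail(e):
    """Hinge-shaped tail term: zero inside the deadband, linear outside."""
    return jnp.maximum(jnp.abs(e) - EPS, 0.0)


for err in [0.5, 0.1, 0.02, 0.005]:
    e = jnp.float32(err)
    d_track = float(jax.grad(late_tracking)(e))
    d_tail = float(jax.grad(tail)(e))
    print(f"e = {err:<6} d(tracking)/de = {d_track:.3f}   d(tail)/de = {d_tail:.1f}")
```

The tracking gradient falls linearly with the error ($12e$). The hinge contributes a constant gradient of 1 until the error drops inside the deadband, where it switches off.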

Why JAX?

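JAX’s jit + grad pipeline means the gradient computation costs roughly the same as a couple of forward passes, no matter how many gains you differentiate with respect to. That is the magic of reverse-mode AD. With jax.lax.scan, the simulation loop is traced and compiled once as a single loop body rather than unrolled step by step in Python, which keeps compilation time under control even for long horizons. Memory is a separate matter. The backward pass stores per-step residuals, so memory grows linearly with the horizon, and jax.checkpoint can trade recomputation for memory if that becomes a problem.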
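If memory does become the bottleneck, a common pattern is to wrap the scan body in jax.checkpoint. The step's internal intermediates are then recomputed during the backward pass instead of being stored for every time step. The sketch below uses hypothetical plant constants and an ISE loss. The gradients should match the un-checkpointed version; only the memory/compute trade-off changes.

```python
import jax
import jax.numpy as jnp

# Hypothetical plant and simulation settings.
M, C, K_SPRING, DT, N_STEPS = 1.0, 0.5, 4.0, 0.01, 800


def make_grad_fn(remat: bool):
    """Build a jitted gradient of the ISE loss, optionally rematerialising the scan body."""

    def loss(gains):
        kp, ki, kd = gains

        def step(carry, _):
            x, v, integ = carry
            e = 1.0 - x
            u = kp * e + ki * integ - kd * v
            v = v + DT * (u - C * v - K_SPRING * x) / M
            x = x + DT * v
            return (x, v, integ + DT * e), e

        # With jax.checkpoint, intermediates inside `step` are recomputed on the
        # backward pass instead of being saved for every time step.
        body = jax.checkpoint(step) if remat else step
        zero = jnp.zeros((), dtype=jnp.float32)
        _, errors = jax.lax.scan(body, (zero, zero, zero), None, length=N_STEPS)
        return jnp.sum(errors ** 2) * DT

    return jax.jit(jax.grad(loss))


gains = jnp.array([3.0, 1.0, 0.5], dtype=jnp.float32)
g_plain = make_grad_fn(remat=False)(gains)
g_remat = make_grad_fn(remat=True)(gains)
print(g_plain, g_remat)
```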

There are no finite-difference approximations and no symbolic manipulations. The gradients are exact gradients of the discretised rollout, up to floating-point rounding, regardless of the complexity of the integrator or the loss.
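A central finite-difference comparison is a cheap way to confirm this in practice. The sketch below enables 64-bit floats, which is a global JAX setting, so that the finite-difference estimate is accurate enough to compare against. The plant constants, the gains and the step h are hypothetical.

```python
import jax
import jax.numpy as jnp

jax.config.update("jax_enable_x64", True)  # global switch: use float64 for a clean comparison

# Hypothetical plant and simulation settings.
M, C, K_SPRING, DT, N_STEPS = 1.0, 0.5, 4.0, 0.01, 800


def loss(gains):
    kp, ki, kd = gains

    def step(carry, _):
        x, v, integ = carry
        e = 1.0 - x
        u = kp * e + ki * integ - kd * v
        v = v + DT * (u - C * v - K_SPRING * x) / M
        x = x + DT * v
        return (x, v, integ + DT * e), e

    zero = jnp.zeros((), dtype=gains.dtype)
    _, errors = jax.lax.scan(step, (zero, zero, zero), None, length=N_STEPS)
    return jnp.sum(errors ** 2) * DT


gains = jnp.array([3.0, 1.0, 0.5])      # float64 because x64 is enabled
g_ad = jax.grad(loss)(gains)

h = 1e-6                                 # hypothetical finite-difference step
g_fd = jnp.array([
    (loss(gains.at[i].add(h)) - loss(gains.at[i].add(-h))) / (2 * h)
    for i in range(3)
])
print(g_ad)
print(g_fd)
print("max abs difference:", float(jnp.max(jnp.abs(g_ad - g_fd))))
```

The finite-difference estimate needs two extra rollouts per gain, and its accuracy depends on the choice of h. The AD gradient needs neither.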

Results

The optimisation starts from $K_i = K_d = 0$, a pure proportional controller, and runs Adam for 3000 iterations. The learning rate follows a cosine decay from its peak value toward zero. The decay is important: without it the gains could grow indefinitely, since larger gains always reduce the time-weighted tracking error further. The cosine schedule lets the optimiser move fast early and then freeze the gains as the learning rate approaches zero.
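The optimisation loop can be sketched without extra libraries. The code below hand-rolls Adam and a cosine learning-rate schedule. optax provides both ready-made (optax.adam, optax.cosine_decay_schedule); they are written out here only to keep the example self-contained. PEAK_LR, the shortened N_ITERS = 300, the plant constants and the simplified loss (time-weighted tracking only) are all hypothetical.

```python
import jax
import jax.numpy as jnp

# Hypothetical plant, horizon and optimiser settings.
M, C, K_SPRING, DT, T_END = 1.0, 0.5, 4.0, 0.01, 8.0
N_STEPS = int(round(T_END / DT))
PEAK_LR, N_ITERS = 0.05, 300   # the post ran 3000 iterations; shortened here


def loss(gains):
    """Time-weighted tracking error only (a simplified stand-in for the full objective)."""
    kp, ki, kd = gains

    def step(carry, _):
        x, v, integ = carry
        e = 1.0 - x
        u = kp * e + ki * integ - kd * v
        v = v + DT * (u - C * v - K_SPRING * x) / M
        x = x + DT * v
        return (x, v, integ + DT * e), e

    zero = jnp.zeros((), dtype=jnp.float32)
    _, e = jax.lax.scan(step, (zero, zero, zero), None, length=N_STEPS)
    t = jnp.arange(N_STEPS) * DT
    return jnp.mean((1.0 + 5.0 * (t / T_END) ** 2) * e ** 2)


def cosine_lr(i):
    """Cosine decay from PEAK_LR at i = 0 down to 0 at i = N_ITERS."""
    return 0.5 * PEAK_LR * (1.0 + jnp.cos(jnp.pi * i / N_ITERS))


@jax.jit
def adam_step(params, m, v, i):
    """One Adam update with bias correction and the scheduled learning rate."""
    b1, b2, eps = 0.9, 0.999, 1e-8
    g = jax.grad(loss)(params)
    m = b1 * m + (1.0 - b1) * g
    v = b2 * v + (1.0 - b2) * g ** 2
    m_hat = m / (1.0 - b1 ** (i + 1))
    v_hat = v / (1.0 - b2 ** (i + 1))
    return params - cosine_lr(i) * m_hat / (jnp.sqrt(v_hat) + eps), m, v


params = jnp.array([2.0, 0.0, 0.0], dtype=jnp.float32)  # pure P start: Ki = Kd = 0
m_state, v_state = jnp.zeros_like(params), jnp.zeros_like(params)
initial_loss = float(loss(params))
for i in range(N_ITERS):
    params, m_state, v_state = adam_step(params, m_state, v_state, i)
print(f"loss {initial_loss:.4f} -> {float(loss(params)):.4f}, gains (Kp, Ki, Kd) = {params}")
```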

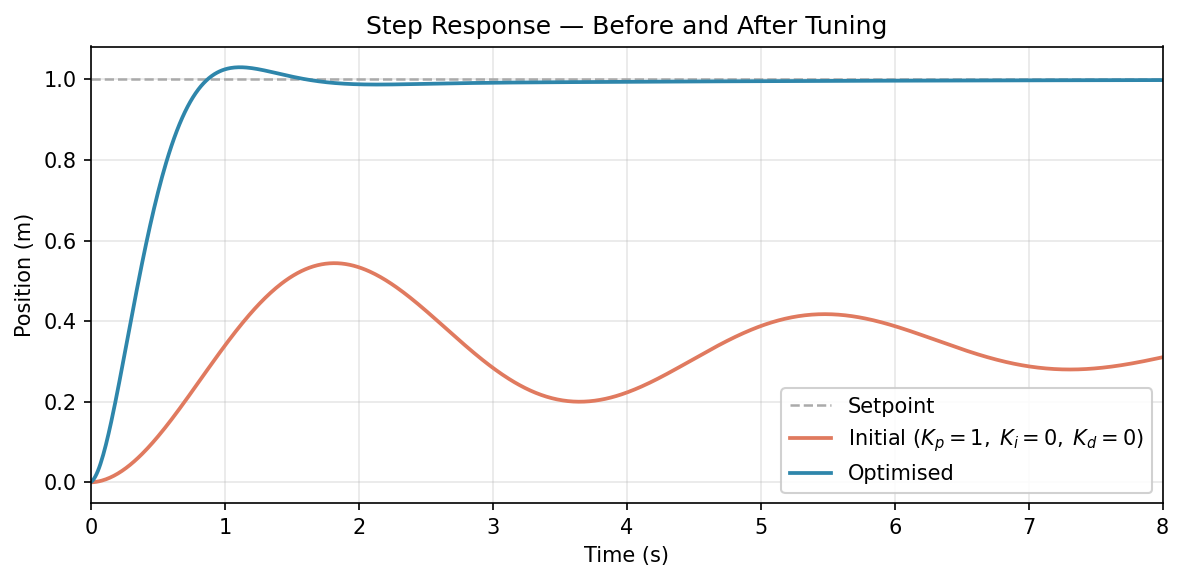

Step Response Comparison

The figure below compares the step response over the 8-second simulation window.

Orange is the initial P-only controller ($K_i = K_d = 0$). It never reaches the setpoint. This is expected: with proportional control only, the steady state balances $K_p(1 - x) = kx$. The position therefore settles at $x_{ss} = K_p/(K_p + k)$, leaving a permanent offset of $k/(K_p + k)$.

Blue is the optimised controller. It reaches $x = 1$ and stays there. The transient has a mild overshoot followed by a few damped oscillations, as expected for a lightly damped plant. These oscillations decay, and the response settles cleanly within the 8-second window.
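The P-only offset formula is easy to verify by simulation. The sketch below runs the P-only loop for 60 s, long enough for the transient to die out, with a hypothetical $K_p = 2$ and $k = 4$. The predicted steady state is then $x_{ss} = 2/6 \approx 0.333$, with an offset of $4/6 \approx 0.667$.

```python
import jax
import jax.numpy as jnp

# Hypothetical plant and gain; 60 s is long enough for the P-only transient to decay.
M, C, K_SPRING, KP = 1.0, 0.5, 4.0, 2.0
DT, N_STEPS = 0.01, 6000


def step(carry, _):
    x, v = carry
    u = KP * (1.0 - x)                          # P-only controller: Ki = Kd = 0
    v = v + DT * (u - C * v - K_SPRING * x) / M
    x = x + DT * v
    return (x, v), None


zero = jnp.zeros((), dtype=jnp.float32)
(x_final, _), _ = jax.lax.scan(step, (zero, zero), None, length=N_STEPS)

x_ss = KP / (KP + K_SPRING)        # predicted steady-state position
offset = K_SPRING / (KP + K_SPRING)
print(float(x_final), x_ss, offset)
```

The simulated final position matches the predicted value. This holds for the discrete update as well, because at a fixed point $v = 0$ and the control force exactly balances the spring.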

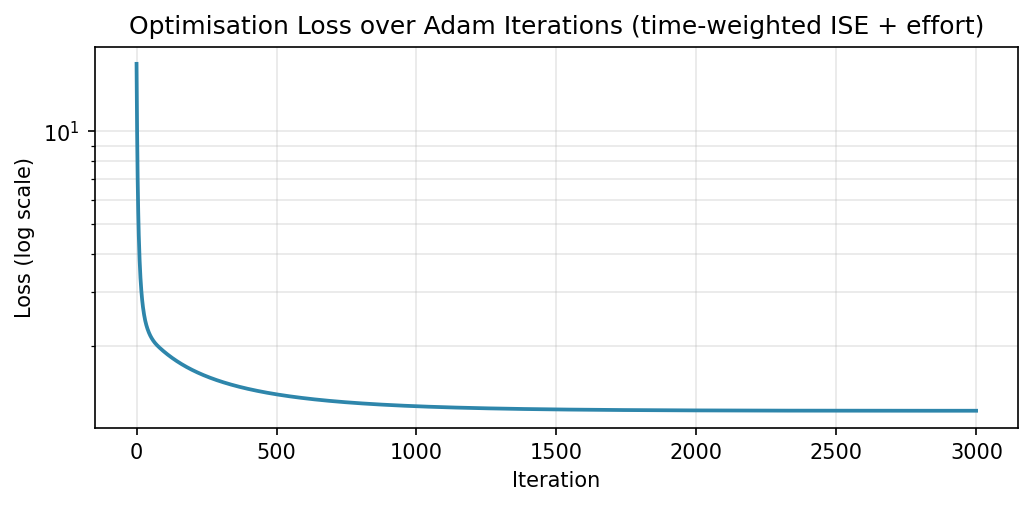

Loss Curve

The loss drops by almost three orders of magnitude over 3000 iterations.

The vertical axis is on a log scale. The steepest descent happens in the first few hundred iterations, while the learning rate is still near its peak value. After roughly iteration 1000 the cosine schedule has reduced the learning rate significantly and the curve flattens. The final plateau is where the optimiser has effectively converged: the gains are no longer moving much.

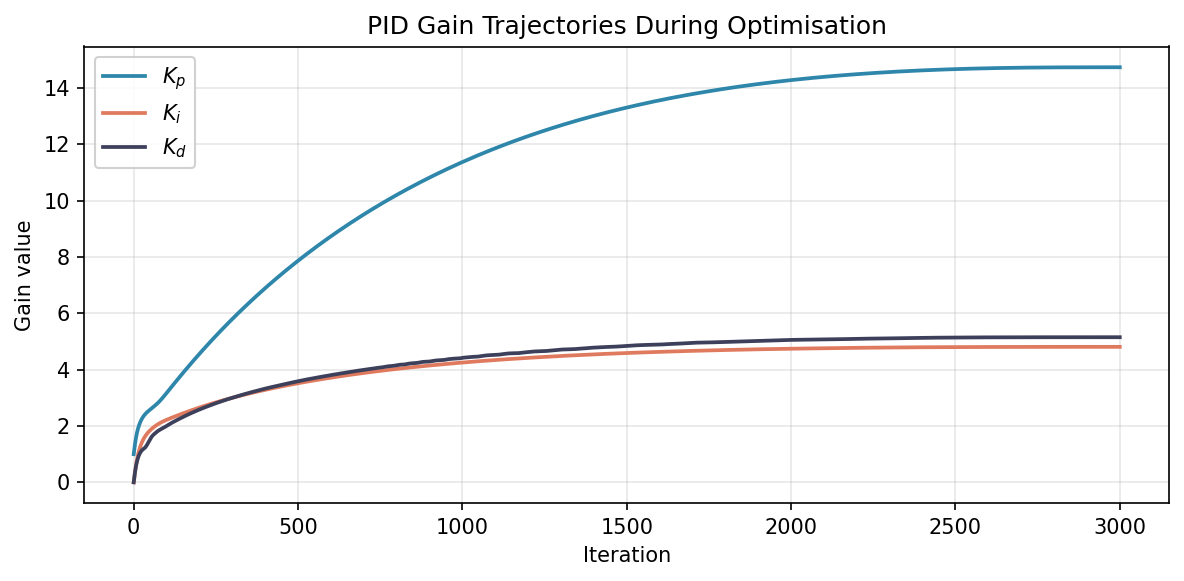

Gain Trajectories

This is the most instructive plot: watching the gains evolve reveals what the gradient is actually discovering.

$K_p$ (blue) and $K_d$ (green) respond immediately, within the first few dozen iterations. They act on the current error and current velocity respectively, so their gradient signal is strong and direct. $K_i$ (orange) lags because its effect accumulates over the entire trajectory; the gradient signal reaching it is more diffuse and takes longer to build up.

All three curves flatten as the cosine schedule drives the learning rate toward zero, a clean visual signature of convergence rather than a hard stop. Nobody specified the final gains; the optimiser discovered them by minimising the loss.

Tracking Error

The clearest way to see the steady-state improvement is to look at the error signal directly.


Orange (initial, $K_i = K_d = 0$): the error quickly settles to $k/(K_p + k)$ and remains there for the rest of the simulation. This is not a transient. It is a permanent steady-state error that proportional action alone can never eliminate when a spring load is present.

Blue (optimised): the error oscillates during the transient, with a positive excursion early on followed by a small negative dip, and then decays to zero. The oscillations are consistent with the plant’s low damping ratio. Gradient descent, given a loss that penalises late-time errors heavily, figured out on its own that integral action is the right tool for eliminating steady-state error. Without $K_i > 0$, the time-weighted loss is unavoidably large.

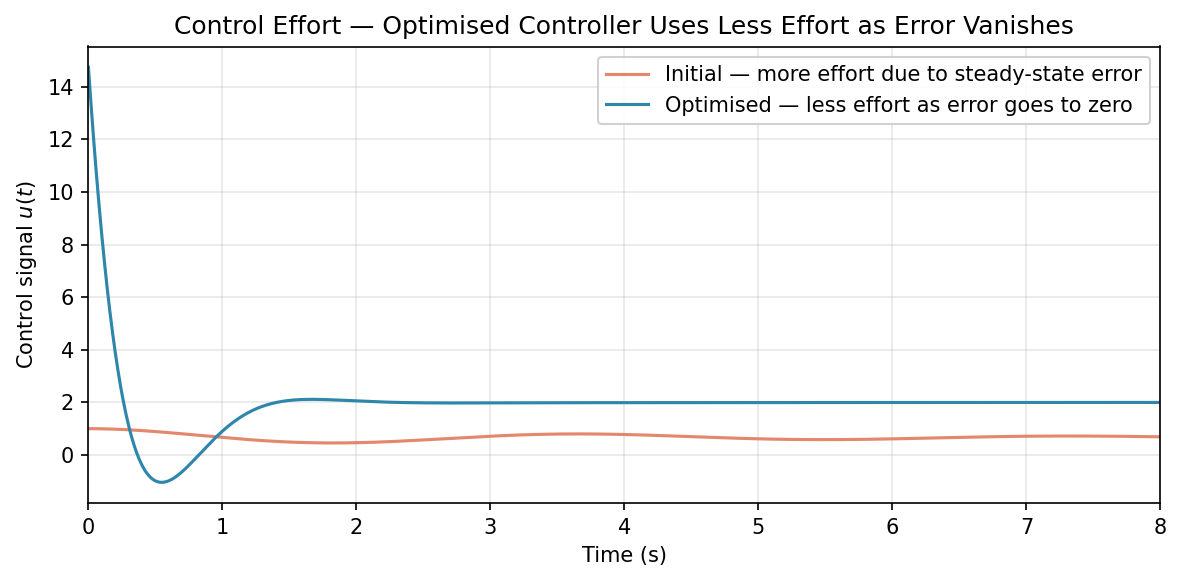

Control Effort

Orange (initial, P-only): the control signal starts at $u_0 = K_p$ and oscillates before settling to $K_p k/(K_p + k)$. This is the force the spring exerts at the steady-state offset position.

Blue (optimised): the first sample is $u_0 = K_p$, a pure proportional kick. The integral and derivative terms are both zero at $t = 0$ because $I_0 = 0$ and $v_0 = 0$. The signal then dips negative early in the transient: this is the derivative braking term pulling back as the mass moves toward the setpoint at its peak velocity. After the transient the signal settles to approximately $k$, which is the spring force needed to hold the mass at $x = 1$.

There is no large spike at $t = 0$ in the optimised controller’s signal. This is a direct consequence of using derivative-on-measurement: since $v_0 = 0$, the derivative term contributes nothing at the first step. Had we used derivative-on-error, the step discontinuity in $e$ at $t = 0$ would have produced a spike of approximately $K_d/\Delta t$ on top of the proportional kick.

Practical Considerations

Simulation fidelity. Gradient-based tuning is only as good as your model. If the simulation drifts significantly from the real plant, the optimal gains may not transfer. The sim-to-real gap is the main risk.

Numerical stability. Long rollout horizons can cause gradient magnitudes to grow or shrink exponentially, which is the same exploding/vanishing-gradient problem that plagues RNN training. For long horizons, consider a smaller step size, a more accurate integrator (e.g. RK4), or gradient clipping. For genuinely stiff systems, an implicit integrator is usually the better choice.
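Gradient clipping by global norm takes only a few lines. The helper below is a hypothetical minimal version; optax.clip_by_global_norm is the library equivalent. It rescales the whole gradient vector when its norm exceeds max_norm, so the direction is preserved.

```python
import jax.numpy as jnp


def clip_by_global_norm(grads, max_norm):
    """Scale `grads` down so its L2 norm is at most `max_norm`; direction is unchanged."""
    norm = jnp.linalg.norm(grads)
    scale = jnp.minimum(1.0, max_norm / (norm + 1e-12))
    return grads * scale


exploding = jnp.array([300.0, -400.0, 0.0])   # hypothetical gradient with norm 500
print(clip_by_global_norm(exploding, 1.0))     # approximately [0.6, -0.8, 0.0]
```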

Local minima. The closed-loop simulation loss is generally non-convex in the gains. In practice, the basin of attraction for reasonable initial guesses is large for common plant types, but it is worth running from several starting points to build confidence.
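Because the loss is a pure function of the gains, multi-start runs vectorise naturally with jax.vmap. The sketch below makes several assumptions: hypothetical plant constants, a simplified time-weighted loss, four hypothetical starting points, and plain gradient descent with a fixed step LR. The per-start gradient is norm-clipped to keep early steps bounded.

```python
import jax
import jax.numpy as jnp

# Hypothetical plant, horizon and gradient-descent settings.
M, C, K_SPRING, DT, T_END = 1.0, 0.5, 4.0, 0.01, 8.0
N_STEPS = int(round(T_END / DT))
LR, N_ITERS = 0.05, 200


def loss(gains):
    """Time-weighted tracking error for gains = (Kp, Ki, Kd)."""
    kp, ki, kd = gains

    def step(carry, _):
        x, v, integ = carry
        e = 1.0 - x
        u = kp * e + ki * integ - kd * v
        v = v + DT * (u - C * v - K_SPRING * x) / M
        x = x + DT * v
        return (x, v, integ + DT * e), e

    zero = jnp.zeros((), dtype=jnp.float32)
    _, e = jax.lax.scan(step, (zero, zero, zero), None, length=N_STEPS)
    t = jnp.arange(N_STEPS) * DT
    return jnp.mean((1.0 + 5.0 * (t / T_END) ** 2) * e ** 2)


def clipped_grad(gains, max_norm=1.0):
    """Gradient of the loss, rescaled so its norm never exceeds max_norm."""
    g = jax.grad(loss)(gains)
    return g * jnp.minimum(1.0, max_norm / (jnp.linalg.norm(g) + 1e-12))


@jax.jit
def gd_step(batch):
    """One gradient-descent step applied independently to every row of `batch`."""
    return batch - LR * jax.vmap(clipped_grad)(batch)


starts = jnp.array([
    [1.0, 0.0, 0.0],
    [2.0, 0.5, 0.2],
    [5.0, 1.0, 1.0],
    [0.5, 0.0, 0.5],
], dtype=jnp.float32)                     # hypothetical starting gains (Kp, Ki, Kd)

params = starts
for _ in range(N_ITERS):
    params = gd_step(params)

final_losses = jax.vmap(loss)(params)
best = params[jnp.argmin(final_losses)]
print(final_losses)
print("best gains:", best)
```

Each row of params evolves independently. Comparing the final losses across rows gives a quick indication of whether the starts landed in the same basin.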

Nonlinear plants. The approach extends directly to nonlinear systems — just swap the linear update for your actual model equations. AD does not care whether the dynamics are linear or not.
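As an illustration, the sketch below replaces the linear spring with a hypothetical hardening spring ($kx + k_3 x^3$) and adds a smooth actuator limit via tanh. Everything else, including the call to jax.grad, is unchanged. The constants K_CUBIC and U_MAX are placeholders. A hard jnp.clip saturation would also run, but its gradient is exactly zero whenever the actuator is saturated, which can stall the optimiser.

```python
import jax
import jax.numpy as jnp

# Hypothetical nonlinear plant: cubic hardening spring plus a smooth actuator limit.
M, C, K_SPRING, K_CUBIC, U_MAX = 1.0, 0.5, 4.0, 2.0, 20.0
DT, N_STEPS = 0.01, 800


def acceleration(x, v, u):
    """Nonlinear dynamics; swap in your own model equations here."""
    u_applied = U_MAX * jnp.tanh(u / U_MAX)   # smooth saturation keeps gradients non-zero
    return (u_applied - C * v - K_SPRING * x - K_CUBIC * x ** 3) / M


def loss(gains):
    kp, ki, kd = gains

    def step(carry, _):
        x, v, integ = carry
        e = 1.0 - x
        u = kp * e + ki * integ - kd * v
        v = v + DT * acceleration(x, v, u)
        x = x + DT * v
        return (x, v, integ + DT * e), e

    zero = jnp.zeros((), dtype=jnp.float32)
    _, e = jax.lax.scan(step, (zero, zero, zero), None, length=N_STEPS)
    t = jnp.arange(N_STEPS) * DT
    return jnp.mean((1.0 + 5.0 * (t / (N_STEPS * DT)) ** 2) * e ** 2)


gains = jnp.array([3.0, 1.0, 0.5], dtype=jnp.float32)
value, grads = jax.value_and_grad(loss)(gains)
print(value, grads)
```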

Takeaways

- You can treat PID gain tuning as a standard gradient-based optimisation problem by differentiating through a closed-loop simulation.

- JAX makes this straightforward: write the rollout, define the loss, call jax.grad.

- The choice of loss matters. A quadratic time-weighting combined with a tail penalty keeps the gradient alive for late-time errors, naturally suppressing steady-state error and sustained ringing. Plain ISE can plateau with a residual offset because the gradient gets small as the error shrinks.

- Gradient descent discovers control structure. Starting from a P-only controller with $K_i = 0$, the optimiser finds on its own that integral action is needed, because without it the time-weighted loss is unavoidably large.

- Derivative-on-measurement eliminates the actuator spike that would otherwise appear at $t = 0$ when the setpoint steps. The benefit is quantitative: the spike avoided is roughly $K_d/\Delta t$, which grows without bound as the step size shrinks.

- The main assumption is that you have a reasonable simulation of your plant. If you do, this approach is fast, flexible, and easy to extend to more complex controllers or multi-loop architectures.

The Python script used to generate all plots is available if you want to run the experiments yourself.